Laser Trail Tracker: A laser pointer tracking system with special emphasis on the shapes of laser trails

Background

Interactive techniques for improvising live visuals are important for stage performance. Using computers to generate real-time rendered graphics is now very popular in live performances, but most of these systems are controlled with a mouse, a keyboard, or at best a MIDI console, none of which is attractive to watch in front of a large screen.

The problem is that using such input devices in front of a large screen on stage gives the performer no feeling of direct manipulation. Moreover, it is difficult for the audience to recognize the relationship between the performer’s body action and the result on the screen. In addition, watching someone shake a mouse or tap a key is not very entertaining.

By contrast, consider a musician’s performance on a live stage. For example, a guitarist’s body action (e.g. neck bending or wailing) is itself a performance, and the relationship between the action and the sound is clear to the audience.

Similarly, in the contemporary computer music field, the lack of performance in “laptop music” has become a problem as well. To address it, there is a conference titled “New Interfaces for Musical Expression (NIME)”, in which many artists, researchers and engineers are involved.

The goal of this research is to improve the interaction part of live visual performance.

System Overview

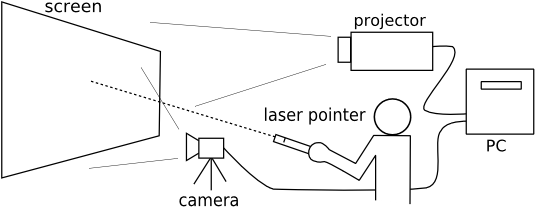

Our laser tracking system, “Laser Trail Tracker (L.T.Tracker)”, recognizes laser trails drawn on a screen with laser pointers, along with their motions. When a visual that reflects those laser strokes is shown on the screen, it appears to be generated by direct interaction with the screen.

A camera placed in front of the screen captures it. The system extracts laser trails from the camera image and tracks their motions using simple image processing and recognition techniques. Finally, a visual processor generates a visual from this information and projects it onto the screen.
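The extraction step can be illustrated with a minimal sketch. It is not the actual L.T.Tracker implementation: it assumes a grayscale camera frame given as rows of 0-255 intensities, and it assumes the camera is exposed so that the laser is much brighter than the projected image. The function names and the `threshold` value are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # assumed input: rows of grayscale intensities, 0-255


def find_laser_pixels(frame: Frame, threshold: int = 240) -> List[Tuple[int, int]]:
    """Return (x, y) coordinates of pixels at least as bright as `threshold`.

    Assumption: the laser is far brighter than anything the projector shows,
    so a plain brightness threshold separates it from the visuals.
    """
    hits = []
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value >= threshold:
                hits.append((x, y))
    return hits


def centroid(pixels: List[Tuple[int, int]]) -> Optional[Tuple[float, float]]:
    """Return the mean position of the detected pixels, or None if none were found."""
    if not pixels:
        return None
    n = len(pixels)
    return (sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n)


if __name__ == "__main__":
    # A dark 4x5 frame with a two-pixel bright spot on row 1.
    frame = [[0] * 5 for _ in range(4)]
    frame[1][2] = 255
    frame[1][3] = 250
    print(centroid(find_laser_pixels(frame)))
```

Thresholding on brightness rather than on color is a deliberate choice in this sketch: it still works when the projected visual contains the same color as the laser.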

Features

There are many previous works on laser-based interaction, but our system differs from them in the following features.

- It employs very bright green laser pointers, whose beams are highly visible to both the performers and the audience.

- No restrictions on the visuals.

- Low latency and high scan rate.

- Position tracking is very stable, even if a laser pointer is moved quickly.

- Trails of laser pointers can be used for generating visuals.
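As one illustration of how the shape of a trail could be turned into parameters for a visual, the sketch below estimates the direction and length of a streak of bright pixels from its principal axis. This is an assumed approach, not the method used by L.T.Tracker; the function name `trail_axis` and the input format (a list of `(x, y)` pixel coordinates already detected as laser light) are hypothetical.

```python
import math
from typing import List, Optional, Tuple


def trail_axis(pixels: List[Tuple[int, int]]) -> Optional[Tuple[float, float]]:
    """Estimate (angle in radians, length in pixels) of a streak of laser pixels.

    The angle is the direction of the principal axis of the pixel cloud,
    computed from its second-order central moments. The length is the extent
    of the pixels projected onto that axis.
    """
    n = len(pixels)
    if n < 2:
        return None
    mx = sum(x for x, _ in pixels) / n
    my = sum(y for _, y in pixels) / n
    sxx = sum((x - mx) ** 2 for x, _ in pixels) / n
    syy = sum((y - my) ** 2 for _, y in pixels) / n
    sxy = sum((x - mx) * (y - my) for x, y in pixels) / n
    # Orientation of the principal axis of a 2x2 covariance matrix.
    angle = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    ux, uy = math.cos(angle), math.sin(angle)
    # Project every pixel onto the axis and measure the covered range.
    proj = [(x - mx) * ux + (y - my) * uy for x, y in pixels]
    return angle, max(proj) - min(proj)


if __name__ == "__main__":
    # A diagonal streak, as might appear when the pointer moves during one exposure.
    streak = [(0, 0), (1, 1), (2, 2), (3, 3)]
    print(trail_axis(streak))
```

A visual processor could map the angle and length to, for example, the orientation and size of a drawn stroke, though that mapping is a design decision outside this sketch.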

Samples

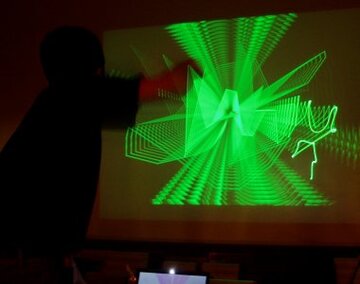

As seen in the figures, you can display the same color as the laser pointer on the screen. Of course, you can also use colors other than green.

Application

Some recordings of stage performances with this system are shown on this page, in the works section.

Publications

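- "レーザーポインタの軌跡を用いた映像パフォーマンスの試み" (An Attempt at Visual Performance Using Laser Pointer Trails). Kentaro Fukuchi (福地 健太郎): Proceedings of Interaction 2005 (IPSJ Symposium Series Vol. 2005, No. 4), pp. 63-64, 2005.3 (Interactive Session)

- "A Laser Pointer/Laser Trails Tracking System for Visual Performance". Kentaro Fukuchi: Human-Computer Interaction - INTERACT 2005 (LNCS3585), pp. 1050-1053, 2005.9

- "レーザポインタの軌跡を追跡する映像パフォーマンス向け遠隔入力システム" (A Remote Input System for Visual Performance That Tracks Laser Pointer Trails). Kentaro Fukuchi (福地 健太郎): IPSJ Journal (情報処理学会論文誌), Vol.49 No.7, pp. 2712-2721, 2008.7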